A decision tree is one of the most frequently and widely used supervised machine learning algorithms, and it can perform both regression and classification tasks. Decision tree learning is a supervised learning approach used in statistics and data mining, among other fields. Decision trees can handle both numerical and categorical data, and they can inherently perform multiclass classification. They are highly interpretable because they are simply a series of if-else conditions. Nonlinear relationships among features do not affect their performance.

In the context of decision trees, entropy is a measure of disorder or impurity in a node or, more generally, in a given dataset. …nothing but a variation of the usual entropy measure for decision trees.

We've already calculated the total entropy for the system above. The next step is to calculate the entropy that remains, relative to that total, after the data is classified on each attribute in the data set. I'm going to show you how a decision tree algorithm decides which attribute to split on first, that is, which of the two features provides more information (reduces more uncertainty) about our target variable, using the concepts of entropy and information gain. This will result in more succinct and compact decision trees.

Entropy for the split on Gender: weighted entropy of sub-nodes, (10/30) 0.

Calculating information gain: a quick plug for an information gain calculator that I wrote recently. Check it out, and continue reading to understand how it works.
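To make the idea of entropy and the weighted entropy of sub-nodes concrete, here is a minimal Python sketch. The function names `entropy` and `weighted_entropy` and the yes/no labels are my own illustration, not the calculator mentioned in this post, and the numbers are made up rather than taken from the data set discussed here.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    total = len(labels)
    counts = Counter(labels)
    # p * log2(1 / p) summed over classes; an empty or pure node gives 0.0
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def weighted_entropy(groups):
    """Entropy after a split: each sub-node's entropy weighted by its share of samples."""
    total = sum(len(g) for g in groups)
    return sum((len(g) / total) * entropy(g) for g in groups)


# Hypothetical labels, for illustration only
mixed = ["yes", "no", "yes", "no"]   # evenly split node: maximum impurity
pure = ["yes", "yes", "yes", "yes"]  # single-class node: no impurity

print(entropy(mixed))
print(entropy(pure))
print(weighted_entropy([["yes", "yes"], ["no", "no"]]))
```

An evenly split two-class node has an entropy of 1 bit, a pure node has an entropy of 0, and a split that separates the classes perfectly leaves a weighted entropy of 0.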

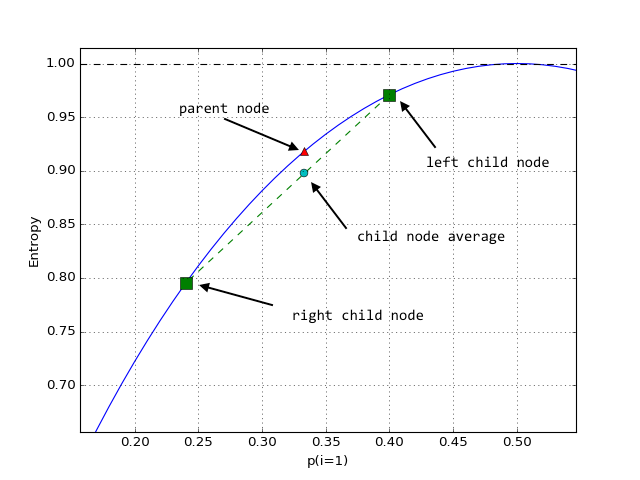

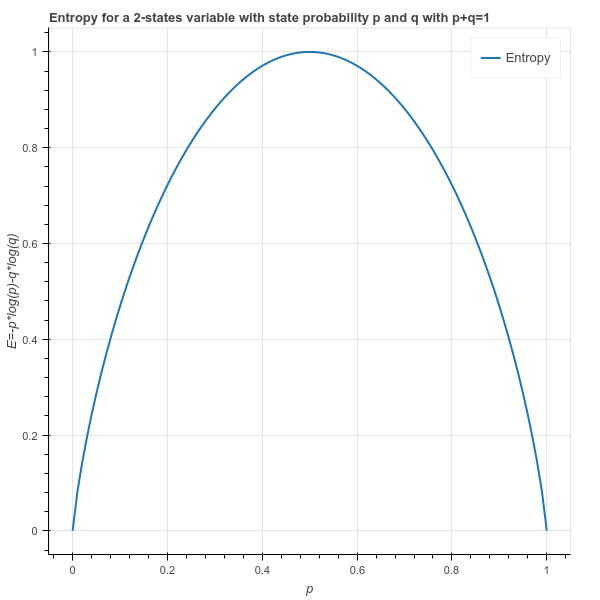

How is this useful when constructing decision trees? Entropy is used when determining how much information is encoded in a particular decision. Information gain is useful when, presented with a set of attributes about your random variable, you need to decide which attribute tells you the most about that variable. When building decision trees, placing the attributes with the highest information gain at the top of the tree leads to the highest-quality decisions being made first.

To reinforce concepts, let's look at our decision tree from a slightly different perspective. Various decision tree algorithms have been developed for classification. For example, the ID3 algorithm uses information gain, which is based on entropy. Each split in a decision tree is chosen to reduce entropy, so that we move toward less heterogeneous (more homogeneous) nodes, and every split therefore brings a reduction in entropy. The more homogeneous the nodes become, the easier it is to draw conclusions.

So the total entropy for the variable "Will I go running" is slightly less than 1, indicating that the yes and no outcomes are close to, but not exactly, evenly split.

Third, we learned how decision trees use entropy in information gain and the ID3 algorithm to determine the exact conditional series of rules to select. Taken together, the three sections detail the typical decision tree algorithm.
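The attribute selection step described above can be sketched in a few lines of Python. This is a simplified illustration of choosing a split by information gain, not a full ID3 implementation. The helper names (`information_gain`, `best_attribute`) and the toy rows with `weather`, `tired`, and `run` columns are hypothetical and are not the data used in this post.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of class labels."""
    total = len(labels)
    return sum((c / total) * math.log2(total / c) for c in Counter(labels).values())


def information_gain(rows, attribute, target):
    """Parent entropy minus the weighted entropy left after splitting on `attribute`."""
    parent = entropy([row[target] for row in rows])
    groups = defaultdict(list)
    for row in rows:
        groups[row[attribute]].append(row[target])
    remainder = sum((len(g) / len(rows)) * entropy(g) for g in groups.values())
    return parent - remainder


def best_attribute(rows, attributes, target):
    """Pick the attribute with the highest information gain (the first split)."""
    return max(attributes, key=lambda a: information_gain(rows, a, target))


# Hypothetical toy data, for illustration only
rows = [
    {"weather": "sunny", "tired": "no", "run": "yes"},
    {"weather": "sunny", "tired": "yes", "run": "yes"},
    {"weather": "rainy", "tired": "no", "run": "no"},
    {"weather": "rainy", "tired": "yes", "run": "no"},
]

for name in ("weather", "tired"):
    print(name, information_gain(rows, name, "run"))
print(best_attribute(rows, ["weather", "tired"], "run"))
```

In this toy data, `weather` separates the outcomes perfectly, so it removes all of the uncertainty, while `tired` removes none. A tree builder would therefore split on `weather` first and then repeat the same calculation inside each sub-node.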
